This is a framework/axis that I've been thinking about a lot this year. But in order to discuss it, I want to start by setting out a few key definitions that will help you understand what I am specifically referring to.

Exploration: I am defining exploration in broad terms as any activity or preoccupation that increases well-being (internally) through positive experience.

State A (the mind at baseline) —> State B (the mind when actively exploring).

Anything in the world that leads to internal well-being can be defined as “exploration”. For example, going on a walk on a bright and sunny day is exploration. Within that walk, you have explored an internal space and moved from one state of being, which might be your baseline range, to another, more positive one. You can broadly define “positive” here as “more dopamine in the nervous system” if you would like, but to be honest, that level of detail is not necessary for this thought experiment.

Exploitation: I would define this as any activity in which one person is able to go from one state of being to a more positive one through the effort of someone else. It is the same switch from point A to point B, but in this situation it happens mostly because of another, external agent. This could be loosely defined as “passive exploration”, because one does not need to be an active participant.

State A (person A at baseline) —> Individual B creates a positive experience —> State B (the mind of person A when passively exploring this newly created experience).

In other words, exploitation simply means using an external agent to achieve a similar level of positive experience. And through a purely selfish lens, that's more efficient than just exploring because of the reduced cost of entry into said experience.

This is the trade-off that I want to talk about here, because many people spend little to no time thinking about the specific ways in which their “wellness-seeking utility” is often exploitative. This balancing effect naturally compounds within all the daily decisions most people make, and can create hidden asymmetries that have significant ethical implications when taken to their extremes.

When I was in college, a lot of my professors asked me what my interests were. And based on my interests, they directed me to mentors who they thought could help me along the way. What I didn't know then is that a lot of this help would also look a lot like pure exploitation. Like many other young people, I worked for years without pay, purely for experience and the academic accolades to follow. But my work was of high quality and it undoubtedly supported my mentors in many ways within their intellectual explorations. Granted, when you are young, sharp, and upwardly mobile, this might not be so much of a problem. But in later years of life, many who have come through these same ranks have spoken against this ritual (of progressively lowering levels of exploitation over time).

This natural trade-off also occurs in labor distribution, computation, and automation. I've recently become extremely interested in the field of artificial intelligence and some of what that could mean for my field of work, which is healthcare. For example, if you could create a new AI model that could automatically detect conversations and create notes for medical documentation without the need for a physician to document themselves, that would be a great idea, right? However, when you think deeply about that, you might realize that, yes, this technology would create a positive effect for the physician (less time wasted on the screen) and even the patient too (more facetime). But who or what are you also exploiting in this process? Let's think about it.

Is the added benefit of more facetime with your doctor worth the potential exploitation of your entire conversation being recorded and transcribed into a central database by an external agent? Who has access to the model’s structure and code, and can they use it for the wrong reasons? Can the database be hacked? Who is liable if a mistake is made in the speech recognition or transcription process? What about patient confidentiality?

Another potentially exploited party in this example of the “AI scribe” would be, well, the human scribe: the people who are getting paid to document clinical encounters, and also the people on the back end who work to maintain the current systems of documentation, all the translators, billing experts, etc. AI people will paint an idealistic picture in which this work “displacement” process is for the betterment of these individuals, since they can now go explore other things. But this should never hide the fact that James losing his medical billing job for the benefit of a better user experience for Jim or more efficiency for John is a form of exploitation from his vantage point.

This technocratic ‘eternal progress’ mentality is often out of touch with the lives of everyday people and motivated by the selfish imperative of profit maximization, which can hide the plain fact that more technology is not always a net positive. It is this same vein of questionable ethics layered with technical innovation that creates gigantic value bubbles that come crashing down the moment those being exploited revolt against those who are exploiting them under the guise of “exploring a new frontier”. Think Luna and Do Kwon.

In summary, there are important trade-offs to be found within everything and we are all ethically obligated to ponder these trade-offs.

Personally, I find this lens helpful, especially for some of the ideas I see coming over our horizon: crypto, AI, the world of network states, decentralization, and the like. The benefits of these ideas are always asymmetrical, and the trade-offs are hidden behind technicalities or buzzwords. You should evaluate all these ideas by asking yourself: how do my actions, enabled by this innovative technology (leverage), stand to affect others? How does my exploration, or my desire to maximize my own well-being, exploit other people?

I often put these things together and see that the exploration I want is not as important as this other kind of problem that I am creating for others. When this happens, I try to realign with my values and either not engage, or make it more balanced for the humans helping me along.

I would encourage everyone who takes the time to read this to also think about how it plays out in their own lives and see where they could be exploiting others inadvertently. Or, on the other hand, to see how they might have been exploited by others and hopefully seek more balanced ways of exploration.

Closing thoughts

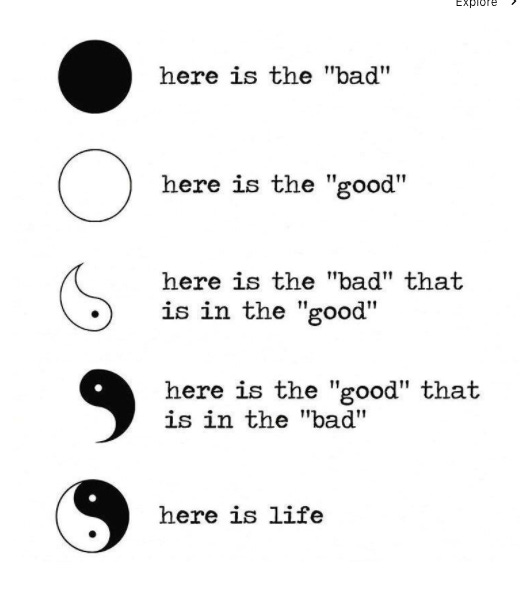

A friend of mine (thank you C.!) was kind enough to speak with me today about these ideas and pointed out their similarity to the concept of “Yin and Yang” in Eastern philosophy. It made me happy to realize that some humans already live with this framework of balance as their Universal truth.

And so I wanted to close with this,

Be well.

-TS