I wanted to write about being human in the dizzying age of transformative ML (machine learning) models and how I think our selfhood will evolve and cope with it. For our purposes, I would define “transformative models” as those that match or exceed human-level benchmarks in narrow fields (AlphaFold, AlphaZero, DALL-E, GPT-x, Whisper, etc.).

Starting with definitions:

Selfhood: /ˈselfˌho͝od/

The quality that constitutes one's individuality; the state of having an individual identity.

If you're like me, AI news has probably taken over your newsfeed and timelines. Increasingly, I’ve been caught up in thinking about the potential of this technology and what it might mean for the rest of us humans as we continue to strive towards wellness, belonging and safety. Much has been made of the economic potential of ML as a new form of leverage, or better yet, its ability to ‘replace’ humans, so I will not be writing about that.

Instead, I would like to shine a light on a unique second-order effect of this development. The best way to realize this is to ask yourself two questions.

What are the things that AI is going to do to the human psyche?

How are humans going to cope with increasing obsolescence?

I think everyone has been asking the opposite of these questions: how is this technical breakthrough going to change how we do things and benefit us? But people are not asking what advances in artificial intelligence say about human intelligence and human worth. This is a bold and ambitious topic, so I will split it into three separate parts to illustrate my point (re: why these two questions are critical).

Part I - Cognitive Intelligence versus Embodied Intelligence.

Many people thought that the AI paradigm would mean that blue-collar jobs would be automated first, and then white-collar jobs (the knowledge economy). As a simple logical progression through increasing complexity in the world, this made intuitive sense. However, this assumption has been proven to be completely wrong. Today, fewer people are required to accomplish cognitive tasks such as writing code (Copilot, GPT-3) and designing digital products (DALL-E, Stable Diffusion). But the same (or more) demand exists for human labor within the realm of physical maintenance problems (fixing railroads, installing cables, etc.).

This means that the creators, artists, musicians, and singers of our generation who thought creativity was exclusively human are now faced with an existential dilemma: either use these AI tools for new leverage or remain purists and risk being “left behind”. When I was an undergraduate, I remember also thinking that creativity was something that would never be automated and so was always going to be valuable to cultivate. We're seeing now that transformers and deep neural networks can compile billions of patterns of human creativity to create new, emergent combinations in an iterative process that mimics ours.

Many like to focus on the claim that this is machine plagiarism or that the output is poor and inconsistent (e.g., see Stable Diffusion's fingers and hands), but anyone who really understands computer scaling laws and the reversibility of current constraints will realize that it is only a matter of time until these issues are fixed.

Essentially:

Things that might slow down generative AI: Data ceilings and plagiarism concerns (regulations), computing power ceiling, safety concerns.

Things that might accelerate generative AI: Capitalism and an AI race (already happening), computer scaling laws, pursuit of prestige.

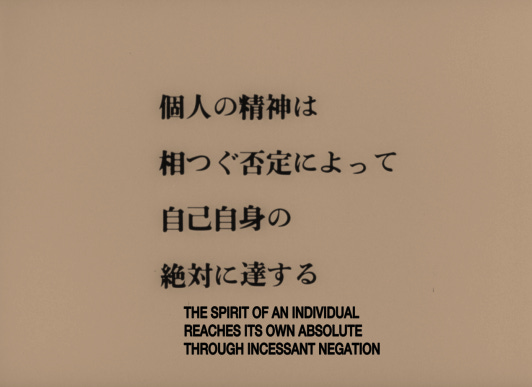

Given all these developments and the trend that we are now on, creativity and imagination might no longer be uniquely human. The cost of this sacrifice is that some humans will see their creativity and leverage catapulted. But all of connected humanity (2 billion+) will now have to live with the knowledge that there are machines out there that can create and learn at a “human-level”. So what is the true foundation of our intrinsically higher moral ground?

Part II - Intelligence and Enablism

Human intelligence, as evidenced by things like executive functioning, long-term memory, theory of mind and higher forms of cognition, has long been thought to be a unique trait of conscious individuals. We later discovered that animals and even plants display behaviors that are akin to intelligence. However, this did not stop us from feeling like we had moral superiority over animals and plants and could therefore keep exploiting them as needed.

But how will we react when a more “intelligent” agent comes into being?

Will we re-think what is valuable about us? Is it our ability to innovate? Is it our ability to feel? Is it our ability to create new explanations for natural phenomena? Physicist David Deutsch argues that humans are unique in their ability to be a ‘universal explainer’ and that this is the source of our moral superiority: other beings (assuming no aliens or higher powers exist) could not explain the laws of gravity and thus begin to understand what it would take to build a plane.

But how long will this truth remain self-evident?

When this theory and others like it are no longer self-evident, we will need new theories of knowledge around human worth, because people who believe them (as I once did) will find it harder to derive meaning from cognitive superiority as a justification of moral worth. Further evidence of this process can be found in how some humans throughout history have partly justified doing horrible things to other humans, such as enslavement and war, because they perceived the other as “less intelligent and capable”.*

Is intelligence really what determines our worth? Or are there things about humans that are more sacred, more important than just intelligence? Who do we appreciate and admire as a society? Who are our celebrities and thinkers? Who are our leading scientists? What is the scientific method in an AGI (artificial general intelligence) world? Ask yourself these questions because they will become increasingly relevant to you and to others.

* Using the same race pseudoscience we still see used today.

Part III - Reflections on Proposed solutions

When faced with this kind of philosophical question of redefining humanity in the age of AGI, most people in charge (i.e., the engineers building these breakthroughs) start hand-waving about the promise of UBI (we’re not even close to this here in the US) and the potential good of a post-scarcity world.

These theories have lots of holes. For example, they fail to account for a sizable percentage of the human population which is still off the grid (no electricity, no internet) and will have unequal representation in training sets and unequal access to downstream productivity benefits. Even in the mainstream US, no one seems to talk about how AI benefits disenfranchised people at the margins (no effect on infrastructure, etc.). This is partly because of the blue-collar/white-collar asymmetry that exists today in AI and robotics (also funding issues), which is reflected in the use cases selected.

Another glaring issue is that the dominant ML models are still closed to the public and owned by for-profit organizations. This creates a third pressing issue: how will governments institute UBI without a strong taxpayer base? If only certain corporations, governments and those who attain hyper-efficiency can still make money, why should they willingly accept the burden of paying for everyone to live with dignity? Why would they follow the same tax laws in a country that they have now outgrown because humans have become obsolete? The real problem with AGI is that it actively discourages any other pursuits of deferred gratification.

Assuming that everyone will equally partake in the ‘spoils’ of AGI or that people will still want to go to school to become lawyers, civil engineers and doctors in an AGI world is magical thinking. It is also inconsistent with history and prior technological breakthroughs. AGI will lead to even bigger inequality as some use it to gain more power and resources; governments will then get involved, and it will get ugly. But across the board, all of us will feel less intrinsically valuable, and our species will be at considerable risk.

This is the issue with arms races: no one is accounting for second-order effects. If the way we currently develop ML models (attain sufficient performance, publish the model, recall it if the UX is too funky) were the way we developed our drugs (Phase 1-4 trials plus active surveillance), most people would be deeply alarmed. I have read about some AI developers, even those with neuroscience, ethics or philosophy backgrounds, who think AGI is a net moral good without much pushback or evaluation (except in a few rare cases). My personal belief is that narrow AI is probably good, but that is where I draw my line. Anyone proposing a basic shift in the way we understand our world and our ability to earn a living (AGI) should always account for the downstream effects of that shift.

Lastly, AGI or other ‘close-to-conscious’ ML models will be created purely for our own good and thus will suffer from our exploitation (lack of agency). Signs of very deep neural networks (tens of billions of parameters) displaying distress during use are already here (see r/bingAI on Reddit). Our understanding of ethics and our theories of what it means to harm non-humans need to grow fast in this area, but as I have written about in prior blogs, I remain skeptical.

Conclusions: Futurism and the world of Hyperobjects

I am against AGI and accelerationism, not because of a fear of impending death or immediate extinction through AGI ‘taking over’, as some have proposed, but because as societies we just aren't equipped to cope with these kinds of hyperobjects.

“Hyperobject”: an agglomeration of networked interactions with the potential to produce inescapable shifts in the very conditions of existence.

Throughout the course of history, we see that hyperobjects drag on with much malaise, division, and unease until a societal-level breaking point occurs.

This is our path right now with developing AI: we are running towards a new form of hyperobject that will narrow the definition of being human in ways that very few people can fully grasp.

Unless we want the WALL-E world of disembodied beings to become our reality, we need cooler heads to prevail.

References and further reading

1- Hybridity and disembodied subjectivity in the posthuman age.

2- Hyperobjects